L&D leaders face a clear shift. AI literacy training now sits at the centre of workplace capability, not at its edges. Teams already use AI tools every day, yet many organisations still rely on informal learning, which creates risk and limits the value those tools deliver. Leaders therefore need a practical, structured approach that supports adoption and protects the business.

Why AI literacy suddenly matters more

AI literacy has moved up the agenda fast, and for good reason.

- Policymakers continue to warn about the UK’s widening AI skills gap, so pressure on employers is rising.

- Public leaders now frame training as a response to job disruption and to the risk facing entry-level roles.

- EU-facing organisations must meet the AI Act's AI literacy obligation, which requires providers and deployers to ensure a sufficient level of AI literacy among staff who operate or use AI systems.

These signals all point in one direction. Organisations must treat AI skills as core workforce capability, not optional development.

What AI literacy really means at work

AI literacy does not mean turning everyone into a data scientist. Instead, it means giving people the right level of understanding for their role.

For most organisations, responsible AI at work starts with three role-based levels:

- All staff: awareness of AI limits, risks, and everyday safe use

- People managers: confidence to guide use, spot risks, and escalate issues

- Specialists: deeper knowledge of data, models, and governance

This structure keeps learning relevant, and it avoids overwhelming learners with content they do not need.

The six-lesson AI literacy core curriculum

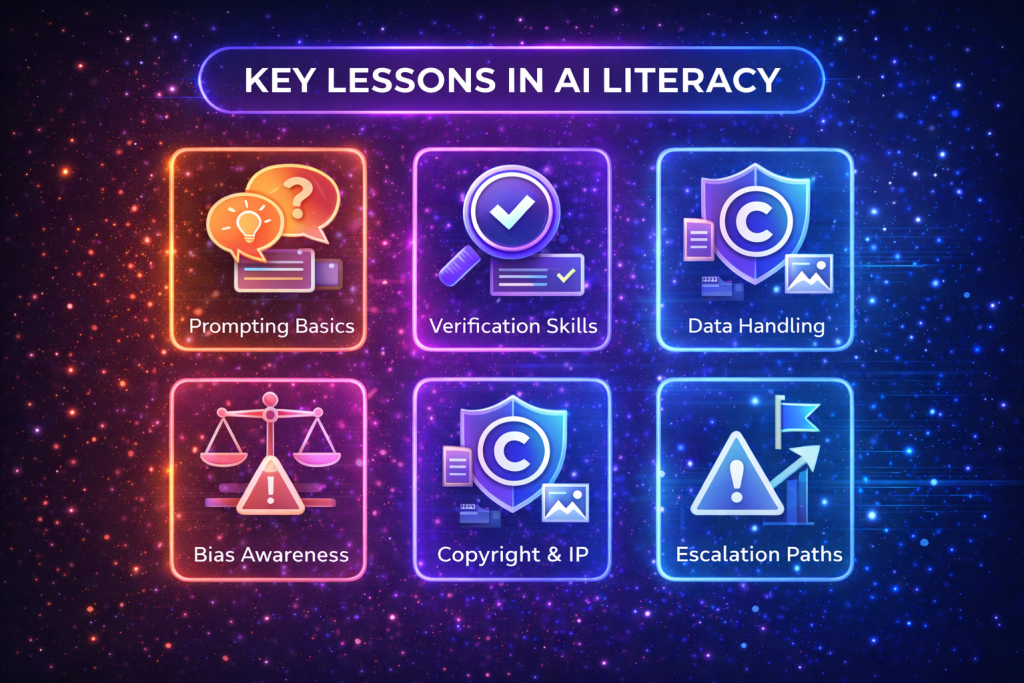

Strong AI literacy training focuses on practical behaviour, not theory. Many programmes now centre on six essential lessons.

- Prompting basics: how to ask clear questions and refine outputs

- Verification skills: checking outputs for accuracy and spotting hallucinations

- Data handling: what can and cannot go into AI tools

- Bias awareness: understanding where AI can reinforce unfair outcomes

- Copyright and IP: understanding the risks around AI-generated content

- Escalation paths: when and how to raise concerns

Together, these lessons reduce risk and build confidence, so staff can use AI productively.

Governance that supports adoption, not fear

AI governance often fails when it blocks momentum. Effective governance takes a different approach and enables safe use instead:

- Publish a short list of approved tools and use cases

- Share clear, simple safe-use guidelines

- Build audit trails into workflows where possible

This balance lets teams move quickly and safely. It also gives leaders visibility without constant policing.

Learning AI skills in the flow of work

Formal courses help, but they are not enough. Workforce upskilling works best when learning fits into daily work.

Successful organisations often add:

- Micro-coaching moments inside tools

- Prompt and workflow templates

- Regular office hours with AI champions

- A community of practice to share examples

These tactics normalise learning and keep AI literacy alive beyond launch.

Measuring whether AI literacy is working

L&D leaders need proof of impact. Fortunately, the effects of AI literacy show up in clear, measurable signals.

Common measures include:

- Adoption rates of approved tools

- Reductions in errors or rework

- Faster cycle times on key tasks

- Improved confidence scores in surveys

- Fewer AI-related risk incidents

Tracking these metrics shows where learning supports performance and where it needs adjustment.

Where JLMS Cloud fits

JLMS Cloud supports structured, role-based AI literacy training that scales. You can deliver modular learning, track adoption, and align AI skills with governance requirements. Because learning sits in one platform, L&D teams gain insight without adding friction for learners.

AI literacy is no longer optional. Organisations that act now build confidence, reduce risk, and unlock real productivity gains.

Sources:

https://www.techradar.com/pro/the-key-to-the-uks-ai-success-lies-in-closing-the-skills-gap

https://digital-strategy.ec.europa.eu/en/faqs/ai-literacy-questions-answers

Discover more from JZero Solutions
